The US government has asked leading artificial intelligence companies for advice on how to use the technology they are creating to defend airlines, utilities and other critical infrastructure, particularly from AI-powered attacks.

The Department of Homeland Security said Friday that the panel it’s creating will include CEOs from some of the world’s largest companies and industries.

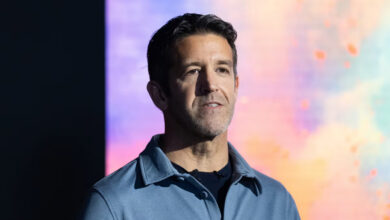

The list includes Google chief executive Sundar Pichai, Microsoft chief executive Satya Nadella and OpenAI chief executive Sam Altman, as well as the heads of defense contractors such as Northrop Grumman and of air carrier Delta Air Lines.

The move reflects the US government’s close collaboration with the private sector as it scrambles to address both the risks and benefits of AI in the absence of a targeted national AI law.

The collection of experts will make recommendations to telecommunications companies, pipeline operators, electric utilities and other sectors about how they can “responsibly” use AI, DHS said. The group will also help prepare those sectors for “AI-related disruptions.”

“Artificial intelligence is a transformative technology that can advance our national interests in unprecedented ways,” said DHS Secretary Alejandro Mayorkas, in a release. “At the same time, it presents real risks — risks that we can mitigate by adopting best practices and taking other studied, concrete actions.”

Among the panel’s other participants are the CEOs of technology providers such as Amazon Web Services, IBM and Cisco; chipmakers such as AMD; AI model developers such as Anthropic; and civil rights groups such as the Lawyers’ Committee for Civil Rights Under Law.

It also includes federal, state and local government officials, as well as leading academics in AI such as Fei-Fei Li, co-director of Stanford University’s Institute for Human-Centered Artificial Intelligence.

The 22-member AI Safety and Security Board is an outgrowth of a 2023 executive order signed by President Joe Biden, who called for a cross-industry body to make “recommendations for improving security, resilience, and incident response related to AI usage in critical infrastructure.”

That same executive order also led this year to government-wide rules regulating how federal agencies can purchase and use AI in their own systems. The US government already uses machine learning or artificial intelligence for more than 200 distinct purposes, such as monitoring volcano activity, tracking wildfires and identifying wildlife from satellite imagery.

Meanwhile, deepfake audio and video, which use AI to push fake content, have emerged as a key concern for US officials trying to protect the 2024 US election from rampant mis- and disinformation. A fake robocall in January imitating Biden’s voice urged Democrats not to vote in New Hampshire’s primary, sounding alarms among US officials focused on election security. A New Orleans magician told CNN that a Democratic political consultant hired him to make the robocall. But there is concern that foreign adversaries like Russia, China or Iran could exploit the same technology.

“It is a risk that is real,” Mayorkas told reporters on Friday while discussing the AI advisory board. “We are seeing adverse nation-states engaged and we work to counter their efforts to unduly influence our elections.”